
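Contents

1. Selecting and Modifying Array Elements

1.1 Selecting and modifying elements of 1-D arrays

1.2 Selecting and modifying elements of 2-D arrays

1.3 Selecting and modifying elements of 3-D arrays

2. Combining and Splitting Arrays

2.1 Combining arrays

2.2 Splitting arrays

3. Array Operations in NumPy

3.1 Arithmetic operations

3.2 Matrix operations in NumPy

3.3 Statistical functions (data preprocessing)

3.4 The random module in NumPy

3.4.1 Random Number Generation

3.4.2 Array Shuffling and Sampling

3.4.3 Generation functions versus shuffling functions: key differences

3.5 Shallow and deep copies of data

4. Reading and Writing Files with NumPy

Summary: The Scientific Value of NumPy in AI Data Processing

Closing Remarks: Modern Applications of NumPy in AI Data Processing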

Introduction

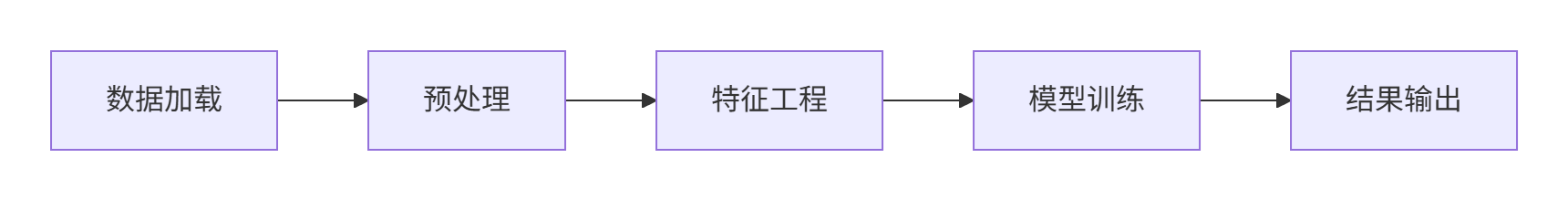

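NumPy is the core library for scientific computing in Python and plays a central role in data processing for artificial intelligence. This chapter examines NumPy's core functions and how they are applied in AI data processing, covering function parameters, usage scenarios, and recommended practices.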

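1. Selecting and Modifying Array Elements

In AI data processing, selecting and modifying elements are the basic operations of data preprocessing. Choosing the right indexing method can improve processing efficiency considerably.

In AI projects, these operations are used for:

- Feature engineering: extracting key features from raw data
- Data cleaning: repairing or removing outliers
- Sample selection: building balanced datasets
- Data augmentation: generating new samples through targeted modification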

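1.1 Selecting and modifying elements of 1-D arrays

    import numpy as np

    array1 = np.arange(1, 9, 1)  # array of 1..8 (start, stop, step)
    print(array1)

    # Basic indexing
    a = array1[1]    # element at index 1 (value 2)
    b = array1[-1]   # negative index: last element (value 8)

    # Advanced indexing
    indices = [1, 3, 5]
    c = array1[indices]  # integer-list index: non-contiguous elements ([2, 4, 6])

    # Slicing
    d = array1[0:6:2]  # indices 0 to 5, step 2 ([1, 3, 5])
    e = array1[::-1]   # reversed array ([8, 7, 6, 5, 4, 3, 2, 1])

    # Boolean indexing
    mask = array1 > 5   # build a boolean mask
    f = array1[mask]    # all elements greater than 5 ([6, 7, 8])

Key functions explained: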

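arange() function:

    numpy.arange(start, stop, step, dtype=None)

- Parameters:
  - start: first value of the sequence (optional, default 0)
  - stop: end of the sequence (the value itself is excluded)
  - step: spacing between values (optional, default 1)
  - dtype: data type of the output array (optional)
- Returns: an ndarray containing an arithmetic sequence
- AI use cases:
  - Creating time-series data
  - Generating test datasets
  - Building coordinate grids

Boolean indexing in AI:

- Outlier detection and filtering
- Sample selection in classification tasks
- Conditional filtering in feature engineering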
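The listing above only selects elements, so the following is a minimal sketch of the modification side: assigning through a boolean mask, a slice, and an integer list. The readings and the valid range [0, 100] are made-up values for illustration and do not come from the original text.

```python
import numpy as np

# Hypothetical sensor readings; the valid range [0, 100] is an assumption
values = np.array([3.0, 250.0, 7.0, -120.0, 5.0, 9.0])

# Boolean mask marking readings outside the assumed valid range
out_of_range = (values < 0) | (values > 100)

# Work on a copy so the raw readings stay untouched
cleaned = values.copy()

# Assignment through the mask replaces only the flagged elements
cleaned[out_of_range] = np.median(values[~out_of_range])

# Slices and integer lists can be assigned to in the same way
cleaned[0:2] = 0.0        # overwrite the first two elements
cleaned[[4, 5]] += 1.0    # increment two selected elements

print(cleaned)
```

Replacing flagged values with the median of the valid ones is only one possible cleaning policy; dropping them with `values[~out_of_range]` is equally common.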

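1.2 Selecting and modifying elements of 2-D arrays

Why 2-D array operations matter:

In computer vision, pixel-level operations make it possible to:

- Improve image quality (denoising, enhancement)
- Extract key regions (ROI selection)
- Perform data augmentation (pixel transformations)

    import numpy as np

    array1 = np.arange(1, 17).reshape(4, 4)  # 4x4 matrix holding 1..16
    print(array1)

    # Single-element access (indices are zero-based)
    a = array1[1, 2]   # row index 1, column index 2 (value 7)
    b = array1[1][2]   # equivalent form

    # Row selection
    c = array1[2, :]   # every element in row index 2
    d = array1[2]      # equivalent form

    # Column selection
    e = array1[:, 1]   # every element in column index 1

    # Sub-matrix selection
    f = array1[1:3, 1:3]  # rows 1-2, columns 1-2

    # Advanced indexing
    rows = [0, 2]
    cols = [1, 3]
    g = array1[np.ix_(rows, cols)]  # index grid: rows 0 and 2 crossed with columns 1 and 3

Function parameters in detail: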

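reshape() function:

    ndarray.reshape(shape, order='C')

- Parameters:
  - shape: an int or tuple of ints defining the new shape
  - order: {'C', 'F', 'A'}, the order in which elements are read
    - 'C': C-style order (row-major)
    - 'F': Fortran-style order (column-major)
    - 'A': Fortran-style order if the array is Fortran-contiguous in memory, C-style otherwise
- Returns: the array with the new shape; a view of the original data when possible, otherwise a copy
- AI use cases:
  - Adjusting the dimensions of image data
  - Converting time-series formats
  - Reorganizing feature matrices

np.ix_() function:

- Purpose: builds an open index grid from several index sequences
- Parameters: N one-dimensional index arrays
- Returns: a tuple of N arrays, each N-dimensional
- AI use: advanced feature selection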
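A short sketch of what `np.ix_` changes compared with passing the two index lists directly, and of how the same index expressions are used for modification. The variable names `paired`, `block`, and `modified` are chosen here for illustration.

```python
import numpy as np

array1 = np.arange(1, 17).reshape(4, 4)
rows = [0, 2]
cols = [1, 3]

# Passing both lists directly pairs them up: elements (0, 1) and (2, 3)
paired = array1[rows, cols]

# np.ix_ crosses them instead: a 2x2 block of rows {0, 2} and columns {1, 3}
block = array1[np.ix_(rows, cols)]

# The same index expressions work on the left-hand side of an assignment
modified = array1.copy()
modified[np.ix_(rows, cols)] = 0   # zero out the 2x2 block
modified[:, 0] = -1                # overwrite the whole first column

print(paired)
print(block)
print(modified)
```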

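1.3 Selecting and modifying elements of 3-D arrays

In video processing and 3-D data analysis, three-dimensional indexing supports:

- Time-series analysis: accessing video frames along the time dimension
- Spatial information extraction: accessing spatial coordinates in point-cloud data
- Multi-dimensional feature fusion: combining temporal and spatial information

    import numpy as np

    # Create a 2x3x4 three-dimensional array
    array3d = np.arange(24).reshape(2, 3, 4)
    print(array3d)

    # Access along different dimensions
    a = array3d[1, 2, 3]   # axis 0 index 1, axis 1 index 2, axis 2 index 3 (value 23)
    b = array3d[:, 1, 2]   # all of axis 0, axis 1 index 1, axis 2 index 2
    c = array3d[1, :, 2]   # axis 0 index 1, all of axis 1, axis 2 index 2

    # Mixed indexing
    d = array3d[1, [0, 2], 1:3]  # axis 0 index 1, axis 1 indices 0 and 2, axis 2 slice 1-2

    # Step control
    e = array3d[0, ::2, ::3]  # axis 0 index 0, axis 1 step 2, axis 2 step 3

Characteristics of 3-D indexing: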

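- Suited to multi-dimensional data such as video and medical imaging
- Supports mixing integer indices, index lists, and slices
- Allows the access pattern of several dimensions to be controlled at once

Applications in computer vision:

- Video frame sampling (step control along the time dimension)
- Feature pyramid extraction (slicing along the spatial dimensions)
- Preparing inputs for 3-D convolutional neural networks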
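As a rough illustration of the frame-sampling and region-selection ideas listed above, the sketch below treats a small array as a grayscale clip. The (time, height, width) layout and the clip size are assumptions made for the example.

```python
import numpy as np

# Hypothetical grayscale clip: 8 frames of 4x6 pixels, laid out as (time, height, width)
video = np.arange(8 * 4 * 6).reshape(8, 4, 6)

# Temporal sampling: keep every second frame
every_other_frame = video[::2]

# Spatial region of interest, taken from all frames at once
roi = video[:, 1:3, 2:5]

# Modification: blank out the first frame on a copy, leaving the source intact
edited = video.copy()
edited[0, :, :] = 0

print(every_other_frame.shape, roi.shape)
```

Both `every_other_frame` and `roi` are views of `video`, so writing to them would change the original clip; the explicit `copy()` is what keeps `edited` independent.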

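2. Combining and Splitting Arrays

Combining and splitting arrays are core operations in a data processing workflow, used most heavily when building datasets and engineering features.

Their main uses include:

- Dataset construction: merging multiple data sources
- Feature engineering: creating composite features
- Cross-validation: splitting training and validation sets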

2.1 Combining arrays

    import numpy as np

    array1 = np.eye(3)  # 3x3 identity matrix
    array2 = np.array([[1, 2, 3], [4, 5, 6], [7, 8, 9]])

    # Horizontal combination
    h_stack = np.hstack((array1, array2))                      # side by side, shape (3, 6)
    concatenate_h = np.concatenate((array1, array2), axis=1)   # equivalent

    # Vertical combination
    v_stack = np.vstack((array1, array2))                      # stacked rows, shape (6, 3)
    concatenate_v = np.concatenate((array1, array2), axis=0)   # equivalent

    # Depth combination
    d_stack = np.dstack((array1, array2))                      # along a new third axis, shape (3, 3, 2)

Function parameters in detail:

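hstack() function:

    numpy.hstack(tup)

- Parameters:
  - tup: a sequence of arrays (tuple or list)
- Requirement: for 2-D inputs, all arrays must have the same number of rows
- Returns: the horizontally stacked array

vstack() function:

    numpy.vstack(tup)

- Parameters:
  - tup: a sequence of arrays (tuple or list)
- Requirement: for 2-D inputs, all arrays must have the same number of columns
- Returns: the vertically stacked array

concatenate() function:

    numpy.concatenate((a1, a2, ...), axis=0)

- Parameters:
  - a1, a2, ...: the sequence of arrays to join (required)
  - axis: the axis to join along (optional, default 0, i.e. vertically)
- Returns: the joined array
- Special cases:
  - axis=0: vertical joining (equivalent to vstack for 2-D arrays)
  - axis=1: horizontal joining (equivalent to hstack for 2-D arrays)
  - axis=2: depth joining (equivalent to dstack only when the inputs are already 3-D; dstack first promotes 2-D inputs to 3-D, whereas concatenate with axis=2 raises an error on 2-D inputs)

Applications in computer vision:

- Stitching views from multiple cameras
- RGB-D data fusion (adding depth information)
- Panorama composition

Value in multimodal AI: fusing data from several sources such as vision, text, and audio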
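The following sketch shows how `dstack` relates to `concatenate` for 2-D inputs and gives a toy RGB-D fusion. The image sizes and the `rgb`/`depth` arrays are placeholders, not data from the original text.

```python
import numpy as np

a = np.eye(3)
b = np.arange(1, 10).reshape(3, 3)

# dstack promotes each 2-D input to shape (3, 3, 1) and joins along axis 2
stacked = np.dstack((a, b))

# The explicit equivalent with concatenate needs the new axis added by hand
manual = np.concatenate((a[:, :, np.newaxis], b[:, :, np.newaxis]), axis=2)

# Toy RGB-D fusion: a 4x5 RGB image plus a 4x5 depth map gives 4 channels
rgb = np.zeros((4, 5, 3))
depth = np.ones((4, 5))
rgbd = np.dstack((rgb, depth))

print(stacked.shape, rgbd.shape)
```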

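2.2 Splitting arrays

    import numpy as np

    array1 = np.random.rand(6, 8)  # 6x8 random matrix

    # Equal splits
    h_splits = np.hsplit(array1, 2)  # 2 pieces along the columns, each (6, 4)
    v_splits = np.vsplit(array1, 3)  # 3 pieces along the rows, each (2, 8)

    # Unequal splits: a list argument gives split positions, not piece widths,
    # so widths of 2, 4 and 2 columns become the split positions [2, 6]
    sections = [2, 4, 2]
    split_points = np.cumsum(sections)[:-1]
    non_equal_split = np.array_split(array1, split_points, axis=1)

    # Explicit split positions
    custom_split = np.split(array1, [2, 5], axis=0)  # cut before row indices 2 and 5

Splitting functions in detail: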

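split() function:

    numpy.split(ary, indices_or_sections, axis=0)

- Parameters:
  - ary: the array to split
  - indices_or_sections:
    - an integer N: split into N equal parts (an error is raised if that is not possible)
    - a sorted sequence of integers: the positions at which to cut
  - axis: the axis to split along, default 0
- Returns: a list of sub-arrays
- AI use cases:
  - Partitioning datasets for cross-validation
  - Processing time series in segments
  - Chunking data for mini-batch gradient descent

array_split() function:

    numpy.array_split(ary, indices_or_sections, axis=0)

- Behavior: like split, but an integer argument does not have to divide the axis evenly
- Special capability: handles cases where an equal split is impossible by making the leading pieces one element longer
- AI use: partitioning datasets whose size is not a multiple of the number of parts

Applications in natural language processing:

- Splitting text into paragraphs
- Processing long sequences in batches
- Partitioning data for parallel processing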
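A minimal sketch contrasting `split` and `array_split` on a length that does not divide evenly, and converting piece widths into split positions. The 10-sample array and the three-fold setting are illustrative assumptions.

```python
import numpy as np

samples = np.arange(10)

# array_split tolerates uneven division: 10 samples into 3 folds
folds = np.array_split(samples, 3)
fold_sizes = [len(fold) for fold in folds]

# split insists on equal parts and raises ValueError otherwise
try:
    np.split(samples, 3)
    split_failed = False
except ValueError:
    split_failed = True

# Piece widths must be turned into cumulative split positions
widths = [2, 4, 2]
cut_points = np.cumsum(widths)[:-1]
pieces = np.split(np.arange(8), cut_points)

print(fold_sizes, split_failed, [p.tolist() for p in pieces])
```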

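3. Array Operations in NumPy

Efficient numerical computation is NumPy's core strength, and it is particularly well suited to the matrix operations and statistical calculations used in AI.

3.1 Arithmetic operations

Where arithmetic operations are used:

- Activation functions: vectorized implementations of Sigmoid, ReLU, and similar functions
- Loss functions: efficient computation of MSE, cross-entropy, and others
- Gradient updates: the additions and subtractions in parameter optimization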

    import numpy as np

    a = np.array([1, 2, 3])
    b = np.array([4, 5, 6])

    # Basic operations
    add = np.add(a, b)        # addition       [5, 7, 9]
    sub = np.subtract(a, b)   # subtraction    [-3, -3, -3]
    mul = np.multiply(a, b)   # multiplication [4, 10, 18]
    div = np.divide(b, a)     # division       [4, 2.5, 2]
    power = np.power(a, 2)    # power          [1, 4, 9]

    # Trigonometric functions
    sin_val = np.sin(np.deg2rad(90))  # sine of 90 degrees (1.0)

    # Comparison operations
    greater = np.greater(a, b)               # [False, False, False]
    equal = np.equal(a, np.array([1, 2, 3])) # [True, True, True]

    # Logical operations
    logical_and = np.logical_and(a > 1, a < 3)  # [False, True, False]

Arithmetic functions in detail:

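add() function:

    numpy.add(x1, x2, /, out=None, *, where=True)

- Parameters:
  - x1, x2: the input arrays
  - out: an array in which to store the result (optional)
  - where: a boolean array selecting the positions at which the operation is performed
- Broadcasting: inputs of different but compatible shapes are automatically expanded to a common shape
- AI use: parameter updates in gradient descent

Trigonometric functions:

- sin() / cos() / tan(): trigonometric functions
- arcsin() / arccos() / arctan(): inverse trigonometric functions
- deg2rad(): converts degrees to radians
- Use cases: computer graphics, signal processing

Comparison and logical functions:

- Return boolean arrays, which makes them suitable for conditional operations
- Use: threshold decisions in activation functions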
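The activation and loss functions mentioned at the start of this subsection can be written with the element-wise operations shown above. This is a minimal sketch; the helper names `sigmoid`, `relu`, and `mse` are defined here for illustration and are not NumPy functions.

```python
import numpy as np

def sigmoid(x):
    """Element-wise logistic function."""
    return 1.0 / (1.0 + np.exp(-x))

def relu(x):
    """Element-wise rectified linear unit."""
    return np.maximum(x, 0.0)

def mse(y_true, y_pred):
    """Mean squared error over all elements."""
    return np.mean((y_true - y_pred) ** 2)

x = np.array([-2.0, 0.0, 2.0])
activations = relu(x)
probabilities = sigmoid(x)
loss = mse(np.array([1.0, 2.0, 3.0]), np.array([1.0, 2.0, 5.0]))

# Broadcasting: a (2, 3) matrix plus a (3,) vector adds the vector to every row
weights = np.array([[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]])
bias = np.array([0.1, 0.2, 0.3])
shifted = weights + bias

print(activations, probabilities, loss)
```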

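3.2 Matrix operations in NumPy

Why matrix operations matter:

- Neural network foundations: a fully connected layer is, at its core, a matrix multiplication
- Attention mechanisms: the Q, K, V transformations are matrix operations
- Convolution optimization: convolutions can be accelerated by rewriting them as matrix multiplications

    import numpy as np

    A = np.array([[1, 2], [3, 4]])
    B = np.array([[5, 6], [7, 8]])

    # Matrix multiplication
    dot_product = np.dot(A, B)   # for 2-D inputs this is the matrix product [[19, 22], [43, 50]]
    matmul = np.matmul(A, B)     # dedicated matrix-multiplication function, same result here

    # Determinant
    det = np.linalg.det(A)       # approximately -2.0

    # Matrix inverse
    inv_A = np.linalg.inv(A)     # inverse matrix

    # Pseudo-inverse
    pinv_A = np.linalg.pinv(A)   # Moore-Penrose pseudo-inverse

    # Eigenvalues and eigenvectors
    eigenvalues, eigenvectors = np.linalg.eig(A)  # eigendecomposition

The linear algebra module in detail: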

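np.linalg.inv() function:

    numpy.linalg.inv(a)

- Parameters:
  - a: a square matrix
- Requirement: the matrix must be invertible (non-zero determinant)
- Use: solving for linear regression parameters

np.linalg.pinv() function:

    numpy.linalg.pinv(a, rcond=1e-15)

- Parameters:
  - a: the input matrix
  - rcond: cutoff for small singular values (the default shown is the NumPy 1.x one and may differ in other versions)
- Behavior: also works for singular or non-square matrices
- Use: least-squares solutions of over-determined linear systems

Eigendecomposition in AI:

- PCA dimensionality reduction
- Analysis of matrix properties through their eigenvalues
- Spectral clustering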
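To make the "over-determined linear system" use concrete, here is a small sketch that fits linear regression weights with the pseudo-inverse and cross-checks them against `np.linalg.lstsq`. The synthetic data, the true weights, and the noise-free target are assumptions chosen so the result is easy to verify.

```python
import numpy as np

np.random.seed(0)

# Synthetic, noise-free data: y = 2*x1 - 1*x2 + 0.5 (values assumed for illustration)
X = np.random.normal(size=(50, 2))
true_weights = np.array([2.0, -1.0])
y = X @ true_weights + 0.5

# Append a column of ones so the intercept is learned as a third weight
X_design = np.hstack((X, np.ones((50, 1))))

# Least-squares solution through the Moore-Penrose pseudo-inverse
w_pinv = np.linalg.pinv(X_design) @ y

# The same problem solved with the dedicated least-squares routine
w_lstsq, *_ = np.linalg.lstsq(X_design, y, rcond=None)

print(w_pinv)
```

With 50 equations and 3 unknowns the system has no exact inverse, which is why `pinv` (or `lstsq`) is used rather than `inv`.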

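3.3 Statistical functions (data preprocessing)

Statistical functions in data science:

- Standardization: z = (x - μ)/σ
- Feature screening: selecting features by a variance threshold
- Anomaly detection: identifying outliers with the 3σ rule

    import numpy as np

    data = np.random.randn(100, 5)  # 100 samples, 5 features

    # Basic statistics
    mean_val = np.mean(data, axis=0)      # per-column mean
    median_val = np.median(data, axis=0)  # per-column median
    std_dev = np.std(data, axis=0)        # per-column standard deviation
    variance = np.var(data, axis=0)       # per-column variance

    # Percentiles
    percentile_25 = np.percentile(data, 25, axis=0)  # 25th percentile
    percentile_75 = np.percentile(data, 75, axis=0)  # 75th percentile

    # Correlation analysis
    correlation = np.corrcoef(data, rowvar=False)  # 5x5 correlation matrix of the features

    # Histogram
    hist, bins = np.histogram(data[:, 0], bins=10)  # counts and bin edges for the first column

Statistical functions in detail: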

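np.percentile() function:

    numpy.percentile(a, q, axis=None)

- Parameters:
  - a: the input array
  - q: the percentile or percentiles to compute, between 0 and 100
  - axis: the axis along which to compute
- Use: binning data, outlier detection

np.corrcoef() function:

    numpy.corrcoef(x, y=None, rowvar=True)

- Parameters:
  - x: the input array
  - y: an additional input array (optional)
  - rowvar: if True (default), each row represents a variable; if False, each column represents a variable
- Returns: the correlation coefficient matrix
- Use: analyzing correlation between features

Role in data preprocessing:

- Feature engineering
- Data standardization
- Outlier detection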
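The standardization formula and the 3σ rule from the start of this subsection can be combined as follows. This is a sketch on synthetic data; the planted outlier and the distribution parameters are assumptions made so the effect is visible.

```python
import numpy as np

np.random.seed(42)

# Synthetic feature matrix: 200 samples, 3 features, with one planted outlier
data = np.random.normal(loc=10.0, scale=2.0, size=(200, 3))
data[0, 1] = 100.0

# Column-wise standardization: z = (x - mean) / std
mean = data.mean(axis=0)
std = data.std(axis=0)
z = (data - mean) / std

# 3-sigma rule: flag any row with a feature more than 3 standard deviations out
outlier_rows = np.any(np.abs(z) > 3, axis=1)
cleaned = data[~outlier_rows]

print(outlier_rows.sum(), cleaned.shape)
```

Because the outlier itself inflates the mean and standard deviation, robust alternatives based on the median and percentiles are sometimes preferred; the version above follows the plain 3σ rule named in the text.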

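3.4 The random module in NumPy

3.4.1 Random Number Generation (the numpy.random module)

Uses in AI:

- Weight initialization: breaking the symmetry of a model
- Dropout: randomly dropping neurons
- Noise injection: adding Gaussian noise to improve model robustness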

    import numpy as np

    # Set the random seed
    np.random.seed(42)

    # Uniform distributions
    uniform_data = np.random.rand(100)                 # uniform on [0, 1)
    randint_data = np.random.randint(0, 10, size=100)  # integers in [0, 10)

    # Gaussian distribution
    normal_data = np.random.normal(loc=0, scale=1, size=100)  # standard normal

    # Other distributions
    exponential_data = np.random.exponential(scale=1.0, size=100)  # exponential
    binomial_data = np.random.binomial(n=10, p=0.5, size=100)      # binomial

The random module in detail:

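np.random.seed() function:

    numpy.random.seed(seed=None)

- Parameters: an integer seed value
- Effect: makes the sequence of random results reproducible
- Recommended practice: set the seed at the start of every experiment

np.random.normal() function:

    numpy.random.normal(loc=0.0, scale=1.0, size=None)

- Parameters:
  - loc: mean of the normal distribution (μ) (optional, default 0.0)
  - scale: standard deviation of the normal distribution (σ) (optional, default 1.0)
  - size: output shape (optional, default None, which returns a single value)
- Use: adding Gaussian noise

Applications in deep learning:

- Neural network weight initialization
- Data augmentation (random cropping, rotation)
- Dropout regularization
- Exploration strategies in reinforcement learning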
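The sketch below illustrates three of the points above: reproducibility through the seed, Gaussian noise injection, and a Dropout-style random mask. The feature matrix, noise scale, and keep probability of 0.8 are illustrative assumptions, and the mask follows the common "inverted dropout" scaling rather than any specific framework's implementation.

```python
import numpy as np

# Reproducibility: the same seed yields the same sequence
np.random.seed(42)
first = np.random.rand(3)
np.random.seed(42)
second = np.random.rand(3)
reproducible = np.array_equal(first, second)

# Gaussian noise injection on a placeholder feature matrix
features = np.ones((4, 3))
noisy = features + np.random.normal(loc=0.0, scale=0.1, size=features.shape)

# Dropout-style mask: keep each value with probability keep_prob,
# then rescale the survivors so the expected value is unchanged
keep_prob = 0.8
mask = np.random.rand(*features.shape) < keep_prob
dropped = features * mask / keep_prob

print(reproducible)
print(dropped)
```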

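3.4.2 Array Shuffling and Sampling (shuffle() and related functions)

Key uses in machine learning:

- Dataset randomization: removing ordering bias
- Mini-batch training: random sample selection
- Cross-validation: random dataset partitioning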

    import numpy as np

    # Shuffle a sequence
    sequence = np.arange(10)
    np.random.shuffle(sequence)  # shuffles in place

    # Select a subset
    subset = np.random.choice(sequence, size=3, replace=False)  # sampling without replacement

    # Random permutation
    permutation = np.random.permutation(sequence)  # returns a shuffled copy

    # Multivariate distribution
    multivariate_normal = np.random.multivariate_normal(
        mean=[0, 0], cov=[[1, 0.5], [0.5, 1]], size=100
    )  # multivariate normal, shape (100, 2)

Advanced random functions:

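np.random.shuffle() function:

    numpy.random.shuffle(x)

- Parameters:
  - x: the array or list to shuffle (required)
- Behavior: operates in place, changing the order of the original array and returning None; multi-dimensional arrays are shuffled along the first axis only
- Use: shuffling training datasets

np.random.multivariate_normal() function:

    numpy.random.multivariate_normal(mean, cov, size=None, check_valid='warn')

- Parameters:
  - mean: mean vector (length N)
  - cov: covariance matrix (N×N)
  - size: number (or shape) of samples to draw
- Use: generating simulated correlated features

Applications in generative AI:

- Data generation in GANs
- Latent-space sampling in VAEs
- Posterior sampling in Bayesian models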
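Since `shuffle` works in place on a single array, features and labels are often shuffled together through a shared permutation of indices. The sketch below shows that pattern and a simple mini-batch split; the toy arrays and the batch size of 4 are assumptions.

```python
import numpy as np

np.random.seed(0)

# Toy dataset: row i of X is [2i, 2i + 1] and its label is i
X = np.arange(20).reshape(10, 2)
y = np.arange(10)

# One shared permutation keeps every sample aligned with its label
order = np.random.permutation(len(X))
X_shuffled = X[order]
y_shuffled = y[order]

# Cut the shuffled data into mini-batches; the last batch may be smaller
batch_size = 4
batches = [
    (X_shuffled[i:i + batch_size], y_shuffled[i:i + batch_size])
    for i in range(0, len(X), batch_size)
]

print(y_shuffled)
print([len(labels) for _, labels in batches])
```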

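3.4.3 Generation functions versus shuffling functions: key differences

Both groups live in the same numpy.random module; they differ in what they do rather than where they are defined.

| Property | Random number generation | Shuffling and sampling |
|---|---|---|
| Main purpose | Generate random numbers | Reorder or sample an existing sequence |
| Common functions | rand(), normal(), randint() | shuffle(), permutation(), choice() |
| Typical uses | Parameter initialization, noise injection | Dataset shuffling, sample selection |
| Reproducibility | Controlled through seed() | Controlled through seed() |
| Return value | New random values | In-place modification (shuffle) or a new array (permutation, choice) |
| Data dependence | Generated independently | Depends on the input sequence |
| Role in AI | Model initialization, data augmentation | Dataset preparation, training strategy |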

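3.5 Shallow and deep copies of data

Memory behavior of the copy mechanisms:

- Shallow copy (view): shares the underlying memory, O(1) memory overhead
- Deep copy: allocates independent memory, O(n) memory overhead
- In practice:
  - Data processing pipelines: views save memory
  - Experiment backups: deep copies keep the data safe
  - Large-array processing: views reduce memory usage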

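    import numpy as np

    # Create a base array
    orig = np.array([1, 2, 3])

    # Shallow copy (view)
    view = orig[:]     # slicing creates a view
    view[0] = 100      # the change is visible in orig as well

    # Deep copy
    deep_copy = orig.copy()  # creates an independent copy
    deep_copy[0] = 200       # the change does not affect orig

    # Using the np.copy() function
    explicit_copy = np.copy(orig)  # explicit copy

    # Checking whether an array is a view
    print(view.base is orig)       # True: the view refers back to orig
    print(deep_copy.base is None)  # True: a copy owns its own data

The copy mechanisms in detail: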

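ndarray.copy() method:

    ndarray.copy(order='C')

- Parameters:
  - order: controls the memory layout of the copy ('C', 'F', 'A', 'K')
- Behavior: creates a complete copy of the array and its data
- Memory overhead: the same as the array being copied

Differences between views and copies:

- View: shares the original data buffer
- Copy: has its own independent memory
- How to tell them apart: through the base attribute

Strategies for large-scale data processing:

- Use views in preprocessing pipelines to save memory
- Use copies when saving results and training models to keep the data safe
- Use np.may_share_memory() to check whether arrays might share memory (it is a fast, conservative check that can report True for arrays that do not actually overlap; np.shares_memory() is exact)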
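A compact sketch of the checks described above. It also covers one case that often surprises: indexing with an integer list returns a copy, not a view. The variable names are illustrative.

```python
import numpy as np

orig = np.arange(6)

view = orig[1:4]            # slicing: a view on the same buffer
copied = orig[1:4].copy()   # explicit copy: independent memory
fancy = orig[[1, 2, 3]]     # integer-list indexing: also a copy

view_shares = np.shares_memory(orig, view)
copy_shares = np.shares_memory(orig, copied)
fancy_shares = np.shares_memory(orig, fancy)

# Writing through the view changes the original; writing to copies does not
view[0] = 99
copied[1] = -1
fancy[2] = -1

print(view_shares, copy_shares, fancy_shares)
print(orig)
```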

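4. Reading and Writing Files with NumPy

Efficient data storage and loading (I/O) is a key part of any AI project and directly affects how fast data can be processed. NumPy provides several functions for working with files.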

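    import numpy as np

    # Basic text reading and writing
    data = np.loadtxt('data.csv', delimiter=',', skiprows=1, dtype=np.float32)
    np.savetxt('output.txt', data, fmt='%.4f', delimiter='\t')

    # Binary files
    np.save('array_data.npy', data)      # save in .npy format
    loaded = np.load('array_data.npy')   # load the .npy file

    # Storing several arrays
    labels = np.zeros(len(data))  # placeholder labels; the original listing leaves `labels` undefined
    np.savez('dataset.npz', X=data, y=labels)  # uncompressed .npz archive (np.savez_compressed compresses)
    with np.load('dataset.npz') as archive:
        X = archive['X']
        y = archive['y']

    # Memory-mapped files
    large_data = np.memmap('large.dat', dtype=np.float32, mode='w+', shape=(10000, 100))

File I/O functions in detail: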

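np.loadtxt() function:

    numpy.loadtxt(fname, dtype=float, comments='#', delimiter=None, converters=None, skiprows=0, usecols=None, unpack=False, ndmin=0, ...)

The signature is abridged; the remaining keyword arguments and some defaults (such as encoding) vary between NumPy versions.

- Key parameters:
  - fname: file name or file object (required)
  - dtype: data type (optional, default float)
  - delimiter: field separator (optional, default None, meaning any whitespace)
  - skiprows: number of leading rows to skip, such as a header row (optional, default 0)
  - usecols: sequence of column indices to read (optional, default None)
  - unpack: if True, the array is transposed so that columns can be unpacked into separate variables (default False)
  - comments: comment marker (optional, default '#')
- Recommended practice: use it for small to medium datasets (<1GB)

np.memmap() function:

    numpy.memmap(filename, dtype=uint8, mode='r+', offset=0, shape=None, order='C')

- Parameters:
  - filename: file name
  - dtype: data type
  - mode: file mode ('r', 'r+', 'w+', 'c')
  - shape: array shape
  - order: memory layout ('C' or 'F')
- Behavior: makes it possible to work with datasets far larger than available memory
- Use: processing large image databases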
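The following sketch round-trips a small array through `.npy`, a compressed `.npz`, and a memory-mapped file. It writes into a temporary directory so it can run anywhere; the array contents and file names are placeholders.

```python
import os
import tempfile

import numpy as np

data = np.arange(12, dtype=np.float32).reshape(3, 4)
labels = np.array([0, 1, 0])

with tempfile.TemporaryDirectory() as tmp:
    # Single array in .npy format
    npy_path = os.path.join(tmp, "array_data.npy")
    np.save(npy_path, data)
    loaded = np.load(npy_path)

    # Several named arrays in one compressed .npz archive
    npz_path = os.path.join(tmp, "dataset.npz")
    np.savez_compressed(npz_path, X=data, y=labels)
    with np.load(npz_path) as archive:
        X, y = archive["X"], archive["y"]

    # Memory-mapped raw file: dtype and shape are not stored in the file,
    # so the reader has to supply the same values as the writer
    dat_path = os.path.join(tmp, "large.dat")
    writer = np.memmap(dat_path, dtype=np.float32, mode="w+", shape=(3, 4))
    writer[:] = data
    writer.flush()
    del writer

    reader = np.memmap(dat_path, dtype=np.float32, mode="r", shape=(3, 4))
    restored = np.array(reader)  # copy into ordinary memory before the file goes away
    del reader

print(loaded.dtype, X.shape, y.tolist())
```

For `.npy` files, `np.load(path, mmap_mode='r')` offers memory mapping without having to repeat the dtype and shape, because the header stores them.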

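Practice in industrial-scale AI systems:

- TB-scale data processing: use memory mapping with np.memmap
- Format conversion: convert CSV files to the faster .npy format
- Incremental learning: load large datasets in chunks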

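File format comparison:

| Format | Characteristics | Typical use | Main advantage |
|---|---|---|---|
| .npy | Efficient binary | Storing a single array | Fast loading |
| .npz | Several arrays in one archive, optionally compressed | Storing datasets | Saves space when written with np.savez_compressed |
| CSV | Human-readable | Data exchange | Widely supported |
| HDF5 | Supports very large data (through third-party libraries such as h5py, not NumPy itself) | Large projects | Parallel access |

Performance comparison of the file formats:

| Format | Read speed | Disk space | Data type support | Typical use |
|---|---|---|---|---|
| .npy | Very fast | Medium | Rich | Storing intermediate results |
| .npz | Fast | Smaller (when compressed) | Rich | Packaging datasets |
| CSV | Slow | Large | Limited | Data exchange |
| HDF5 | Medium | Smaller | Rich | Large datasets |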

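End-to-end data processing pipeline example:

    import numpy as np

    class DataProcessor:
        def __init__(self, input_path):
            # Memory-map the .npy file so a large input is not read eagerly
            self.data = np.load(input_path, mmap_mode='r')
            self.processed_data = None

        def preprocess(self):
            # 1. Missing values: drop every row that contains a NaN
            cleaned = self.data[~np.isnan(self.data).any(axis=1)]
            # 2. Feature selection by variance threshold, applied before
            #    standardization (afterwards every feature has variance 1)
            variances = np.var(cleaned, axis=0)
            selected = cleaned[:, variances > 0.1]
            # 3. Feature standardization
            means = np.mean(selected, axis=0)
            stds = np.std(selected, axis=0)
            self.processed_data = (selected - means) / stds
            return self.processed_data

        def save(self, output_path):
            # Efficient binary storage; call preprocess() first
            np.save(output_path, self.processed_data)

Data pipelines in deep learning: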

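    import glob
    import numpy as np

    # Build an efficient data loader
    class DataLoader:
        def __init__(self, file_pattern):
            self.files = sorted(glob.glob(file_pattern))

        def __iter__(self):
            for file in self.files:
                # Memory-map each large file; every file is assumed to hold
                # a (1000, 256) float32 array
                data = np.memmap(file, dtype=np.float32, mode='r', shape=(1000, 256))
                # Yield the data batch by batch
                for i in range(0, len(data), 32):
                    yield data[i:i+32]  # batches of 32 samples (the last one holds 8)

Summary: The Scientific Value of NumPy in AI Data Processing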

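NumPy provides solid infrastructure for AI data processing. Its core value shows in the following areas:

- Computational efficiency:
  - Vectorized operations are often 10-100 times faster than pure Python loops for element-wise numeric work, although the actual speedup depends on the workload
  - Memory mapping supports TB-scale data processing
  - Linear algebra routines can use multiple cores when NumPy is linked against a multithreaded BLAS/LAPACK library
- Foundation for algorithm implementation and scientific expression:
  - Linear algebra: matrix decompositions, eigenvalue computation
  - Statistical distributions: sampling from and summarizing probability distributions
  - Stochastic processes: support for Monte Carlo simulation
- Engineering value:
  - Standardized data processing pipelines
  - Reproducible experiments
  - Efficient memory use

As AI models grow more complex, NumPy's foundational role in data processing becomes more visible. Mastering these core techniques gives an AI project strong data processing support.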

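Closing Remarks: Modern Applications of NumPy in AI Data Processing

NumPy is not only the cornerstone of scientific computing but also the core data processing tool in AI projects. This chapter covered:

- Core operations:
  - Flexible element indexing and modification
  - Efficient methods for combining and splitting data
  - Mathematical and statistical operations
- Essentials of function usage:
  - The parameters and behavior of the key functions
  - Recommended practice for different scenarios
  - Techniques for combining functions
- AI application scenarios:
  - Computer vision: image processing, feature extraction
  - Natural language processing: operations on text vectors
  - Reinforcement learning: state representation and transformation
  - Generative AI: data synthesis and transformation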

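Advanced usage recommendations:

- Vectorize first: prefer vectorized operations and avoid Python loops wherever possible
- Memory optimization strategies:
  - Views: use them when working with large datasets
  - Data types: use the smallest type that still preserves the precision the task needs (for example np.float16 or np.int8)
  - Memory mapping: use it for very large data files
- Reproducibility:
  - Set random seeds
  - Record the data processing pipeline
  - Save intermediate results

Future trends:

- Seamless integration with deep learning frameworks (TensorFlow, PyTorch)
- GPU acceleration (through libraries such as CuPy)
- Distributed processing support (Dask with NumPy)

Mastering NumPy's efficient data processing capabilities lays a solid foundation for AI projects and makes it easier to handle increasingly complex data while building smarter, more efficient solutions.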